Research in the humanities remains fundamentally text-based, especially when pioneering new fields or topics. I vividly recall my initial struggle with media theory texts as an inexperienced engineer beginning his Master’s. Additionally, I identify as a “visual learner” – typically finding it challenging to fully grasp content presented solely in text. I rely on graphics or visual elements to structure and format the text for me. (Footnote: The existence of such distinct learning types is still subject of an ongoing academic debate, which I won’t delve into here. However, from my anecdotal and unscientific self-observation, I find that mind maps, infographics, and images greatly aid my text comprehension.)

The following project aims to automate this pre-formatting using Natural Language Processing (NLP) and subsequent visual processing. The explicit goal is not to replace the process of reading, but to provide readers with an accessible entry point into the text. This project focuses specifically on typical media studies texts from the German-speaking humanities, a field typically not addressed by major players in machine learning.

Important Note

I consider these descriptions here as a work in progress, akin to a digital garden that documents my experiments like a lab journal. Therefore, it deliberately includes potential missteps, dead ends, or incorrect assumptions to make my research process transparent.

First proof of concept

For my first “proof of concept,” I chose a classic media theory text: Walter Benjamin’s Das Kunstwerk im Zeitalter seiner technischen Reproduzierbarkeit (“The Work of Art in the Age of Mechanical Reproduction”),1 written around 1935 with approximately 62,000 characters, analyzed using the Python tool spaCy. The model de_core_news_lg was employed for an initial experiment.

To see results quickly, I used spaCy to search the text for all nouns (NOUN) and catalog them in a Python dictionary. An insight: It’s not worthwhile to also output “Proper Nouns” (PROPN),2 as the model struggles with old German orthography, often misclassifying words it can’t categorize (e.g., “daß” or “bewußt”).

# Extract entities and nouns

terms = [token.text for token in doc if token.pos_ in ['NOUN']]Currently, I’m trying to relate these nouns by creating a co-occurrence map using a Python dictionary. If the co-occurrence exceeds a threshold of 1, the script assumes a relationship between two nouns, which is later considered in the visualization process.

Cleanup

The model also assumes that roman numerals (›VI‹), elipses (›…‹) and square brackets (›[123]‹) are nouns. Therefore I apply some regex (using Python’s built in re) to clean up the text and get rid of these letter combinations before applying the NLP:

# get rid of roman numerals (seem to confuse spaCy)

text = re.sub(r'(?=\b[MCDXLVI]{1,6}\b)M{0,4}(?:CM|CD|D?C{0,3})(?:XC|XL|L?X{0,3})(?:IX|IV|V?I{0,3})', ' ', text)

# get rid of citations and converted footnotes in the style of: test.‹[125]

text = re.sub(r'\[\d*]', ' ', text)

# get rid of elipsis as …

text = re.sub(r'…', ' ', text)

# get rid of newlines

text = re.sub(r'\n', ' ', text)

# get rid of two or more consecutive whitespaces & replace with single whitespace

text = re.sub(r'[\s\t]{2,}', ' ', text)Visualization

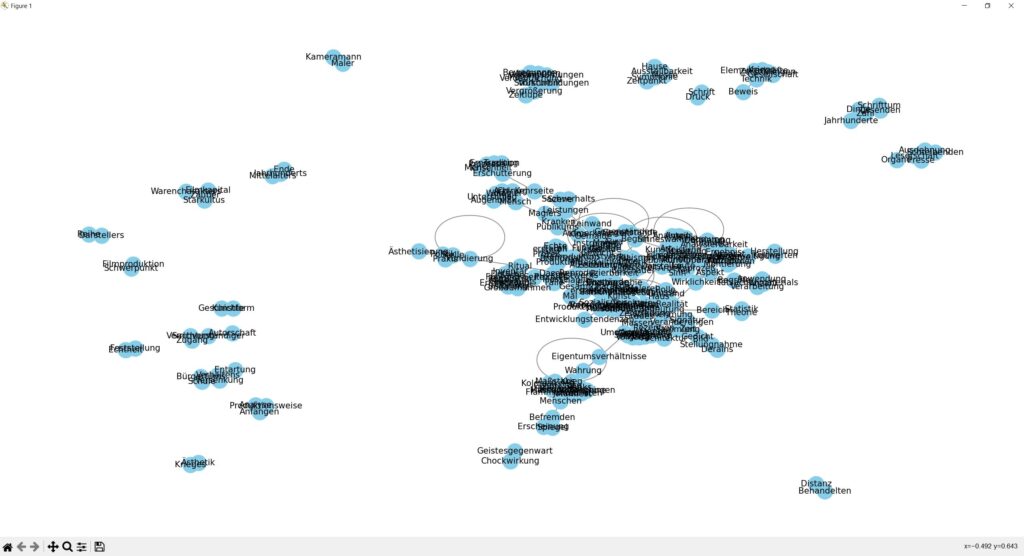

For visualization, I currently use the Python library networkx to create a network diagram from the Python dictionary. Without further configuration, the graphic is not particularly useful:

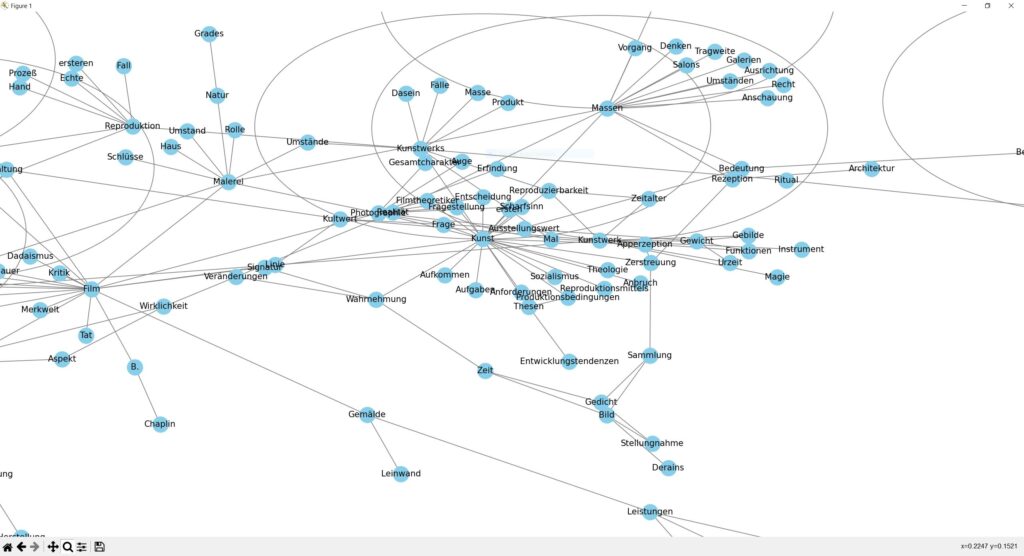

However, zooming in reveals interesting results:

HTML and Interaction

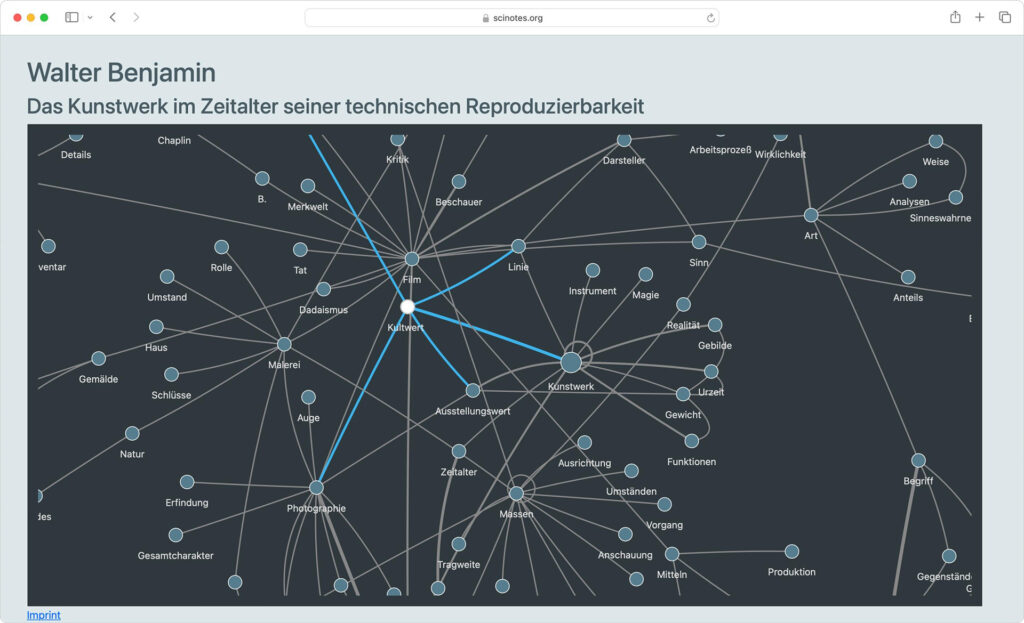

Insight: A toolset is needed for more convenient zooming and interaction. The Python preview is adequate but somewhat clunky. Therefore, I use the Python library pyvis to create a HTML/CSS/JS-compatible webpage. With some styling and manual tweaking, it looks quite sophisticated:

Those interested can experiment with a demo at https://scinotes.org/benjamin. Please be patient: Network generation takes about 15 seconds, depending on your device.

TODOs

- Currently, the NLP runs entirely on the CPU. With a switch to a new model, it should ideally be transferred to GPU using CUDA.

- Currently, the process extracts ›just‹ nouns from the text, which yields surprisingly good results for Benjamin’s text, but can be significantly improved. In the medium term, the

de_core_news_lgshould be enhanced with a model specifically optimized for typical concepts in German media studies.

Learnings

- The standard models

de_core_news_smand andde_dep_news_trfyield significantly worse results in regard to accuracy – i.e. they get often trapped with unusal composita and non-German words. - Although the models are trained on news and not on scientific texts the results look quite promising. However I have to look into the training process a lot more if fine tuning these models with scientific concepts produces meaningful outputs.

Different Approach using a custom GPT

I have spent some time working on creating my own GPT using ChatGPT. After some prompting, this process leads to very good results. So far, I have only used pure (and cleaned) text in UTF-8 txt format – so it is possible that PDFs or less cleanly processed files might not yield equally good output. The custom GPT can be found here (requires a ChatGPT account): https://chat.openai.com/g/g-UemTndd5t-project-benjamin

When creating and revising the GPT, I noticed that some concepts are repeatedly ‘forgotten’. That is, even though the GPT was already instructed to perform certain actions (or avoid certain ouputs), these are gradually neglected if the GPT is revised in the meantime.

After approx. 50 refining prompts my custom-GPT yields this result from Benjamin’s The Work of Art in the Age of Mechanical Reproduction if asked to extract the main ideas from the text:

Technologische Reproduzierbarkeit der Kunst: Dieser Aspekt bildet die Grundlage für die nachfolgenden Punkte. Die Fähigkeit, Kunstwerke mechanisch zu reproduzieren, entzieht ihnen ihre Einzigartigkeit und Unmittelbarkeit, was weitreichende Konsequenzen für ihre Wahrnehmung und Rolle in der Gesellschaft hat. Die Verbindung zu den anderen Aspekten ist stark, da sie den Ausgangspunkt für die Veränderungen im Kunstbereich darstellt.

Verlust der Aura: Eng verbunden mit der technologischen Reproduzierbarkeit, beschreibt der Verlust der Aura die Reduzierung der Einzigartigkeit und der authentischen Erfahrung von Kunst. Diese Idee ist zentral und stark verbunden mit den anderen Aspekten, da der Verlust der Aura direkt aus der technologischen Reproduzierbarkeit resultiert und grundlegende Veränderungen in der Kunstwahrnehmung und -wertschätzung bewirkt.

Demokratisierung des Kunstzugangs: Dieser Aspekt beschreibt die durch technologische Reproduzierbarkeit ermöglichte breitere Verfügbarkeit und Zugänglichkeit von Kunstwerken für ein größeres Publikum. Die Verbindung zu den anderen Ideen ist bedeutend, weil die Demokratisierung die sozialen und kulturellen Funktionen von Kunst verändert und neue Formen der Kunstbetrachtung und -interaktion fördert.

Wandel der kulturellen und politischen Rollen der Kunst: Die Veränderungen in der Art und Weise, wie Kunst produziert, verbreitet und rezipiert wird, führen zu einer Neubewertung ihrer Funktionen in der Gesellschaft. Dieser Aspekt ist stark mit dem Verlust der Aura und der Demokratisierung des Kunstzugangs verbunden, da diese Entwicklungen die Voraussetzungen für den Wandel der Rollen von Kunst schaffen.

Wandel von individueller zu kollektiver Kunstbetrachtung: Die Art und Weise, wie Kunst erfahren wird, verschiebt sich von einer individuellen, kontemplativen Betrachtung hin zu einer kollektiven und teilweise abgelenkten Rezeption, insbesondere durch die Präsenz von Kunst in Massenmedien wie Film. Diese Idee ist eng mit dem Wandel der kulturellen und politischen Rollen der Kunst verbunden, da sie eine direkte Folge der veränderten Funktion und Zugänglichkeit von Kunst darstellt.I am very impressed by the quality of the output, but the workflow is not straightforward: Although the Custom-GPT was explicitly instructed to present the extracted concepts as bullet points (and to output the key aspects in bold), it still requires special prompting to really ensure this output. So, I will still spend some time with the post-correction of this GPT and try to achieve more consistent results.

What I definitely want to look into:

- The possibility of integrating one’s own files into the GPT itself. Is there a limit? – YES, there seems to be hard limit of 20 knowledge files at the moment. Knowledge files do not need any specific syntax, therefore it could be feasible to experiment with some different formats and contents.

- The possibility of extending the GPT via an API. (Maybe build a dedicated server that feeds GPT with certain information?)

Creating visualizations

Now that ChatGPT can also generate images in addition to text using DALL-E, I have advised my custom GPT that it should generate a network diagram of all the important aspects at the end. Of course, this does not lead to meaningful results at the moment.

However, what I can note is that the GPT is excellently suited for extracting the most important ideas and concepts from a text (This also works wonderfully with German theoretical texts) – therefore a hybrid workflow could be feasible: Use GPT for extracting information and use this output for creating a network diagram using Python’s networkx.

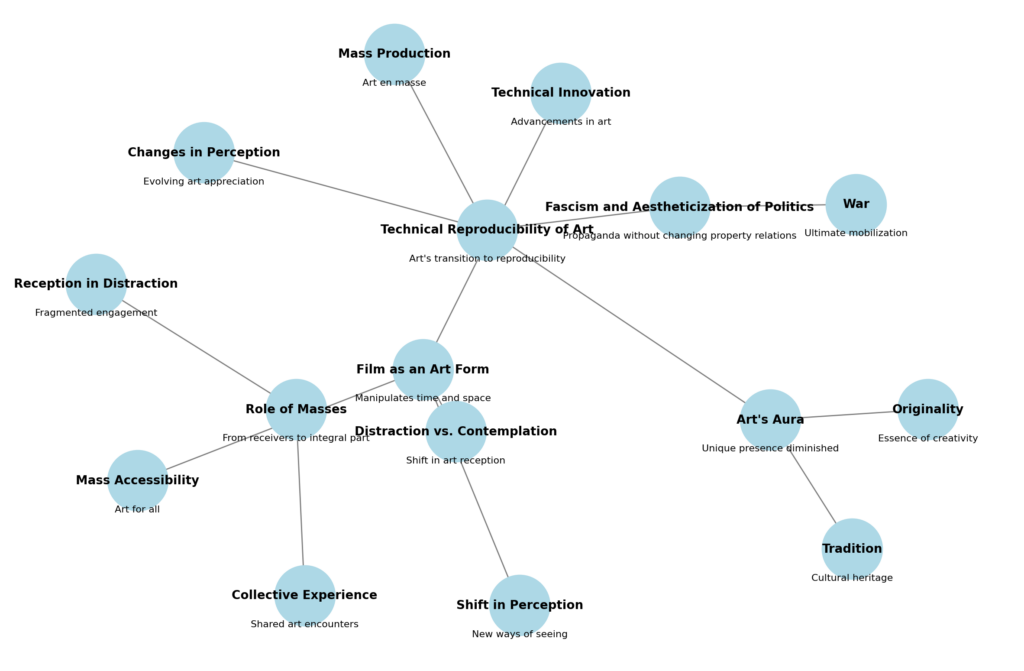

No in-between-steps: Creating Network Diagrams with ChatGPT

It came to my realization that neither a detour through DALL-E, nor a hybrid approach is actually necessary—ChatGPT can generate and execute Python code within the chat console itself. As such, it is possible to extract quite (!) useful network diagrams from the custom-GPT by simply adjusting the prompts accordingly: Instead of having DALL-E ‘conjure’ up a diagram, the GPT is straightforwardly prompted to generate the Python code needed to create a network diagram using networkx.

import networkx as nx

import matplotlib.pyplot as plt

# Create a directed graph

G = nx.DiGraph()

# Define nodes with their descriptions

nodes = [

"Technical Reproducibility of Art",

"Art's Aura",

"Role of Masses",

"Fascism and Aestheticization of Politics",

"Distraction vs. Contemplation",

"Film as an Art Form",

"Originality",

"Tradition",

"Mass Production",

"Changes in Perception",

"Reception in Distraction",

"Collective Experience",

"War",

"Technical Innovation",

"Mass Accessibility",

"Shift in Perception"

]

# Add nodes to the graph

G.add_nodes_from(nodes)

# Define edges between nodes to represent relationships

edges = [

("Technical Reproducibility of Art", "Art's Aura"),

("Technical Reproducibility of Art", "Mass Production"),

("Technical Reproducibility of Art", "Changes in Perception"),

("Art's Aura", "Originality"),

("Art's Aura", "Tradition"),

("Role of Masses", "Reception in Distraction"),

("Role of Masses", "Collective Experience"),

("Fascism and Aestheticization of Politics", "War"),

("Fascism and Aestheticization of Politics", "Technical Reproducibility of Art"),

("Distraction vs. Contemplation", "Film as an Art Form"),

("Film as an Art Form", "Technical Innovation"),

("Film as an Art Form", "Mass Accessibility"),

("Film as an Art Form", "Shift in Perception"),

]

# Add edges to the graph

G.add_edges_from(edges)

# Draw the network

plt.figure(figsize=(12, 8))

pos = nx.spring_layout(G) # positions for all nodes

nx.draw(G, pos, with_labels=True, node_size=2000, node_color="lightblue", font_size=10, font_weight="bold", edge_color="gray")

plt.title("Network Diagram of Key Concepts in Art's Technical Reproducibility", size=15)

plt.show()The twist: this generation also takes place within the chat, leaving behind a decidedly helpful diagram as output. In the case of Benjamin’s paper, the Custom-GPT thus creates the following image:

Different Approach: Comparing ChatGPT-4 and Locally Hosted Models

While the results from ChatGPT-4 have been extremely promising, reliance on a commercial service provider (and the necessity of an internet connection) presents a significant issue. For this reason, I am currently experimenting with models that can be installed locally and operate entirely using local resources. All tests were conducted on a Windows system equipped with an RTX 3080 (12GB VRAM) and 64GB of RAM, using the following prompt in German:

Schreibe eine Zusammenfassung zu Walter Benjamins “Das Kunstwerk im Zeitalter seiner technischen Reproduzierbarkeit”. Schreibe mindestens 10 Sätze in verständlichem aber trotzdem akademischen Deutsch.

In the initial test, I did not provide the models with any additional documents, meaning no Retrieval-Augmented Generation (RAG) or embeddings were utilized.

Test 1

- Model: EM German Mistral

- Parameters: 7 billion

- File size: 3.83 GB

- Language(s) (NLP): German

- Link: https://huggingface.co/jphme/em_german_mistral_v01

1. In seinem Essay "Das Kunstwerk im Zeitalter seiner technischen Reproduzierbarkeit" analysiert Walter Benjamin die Auswirkungen der fortschreitenden Technologie auf das Verhältnis zwischen Kunst und Kultur. Er befasst sich mit den Transformationen, die durch die Möglichkeit der Massenproduktion von künstlerischen Werken entstehen und betont dabei die zunehmende Entmystifizierung des Kunsterlebnisses. 2. Benjamin stellt fest, dass das traditionelle Konzept eines einzigartigen Kunstwerks, das exklusiv in einem speziellen Raum aufbewahrt wird und für einen begrenzten Kreis von Besuchern zugänglich ist, durch die technische Reproduzierbarkeit herausgefordert wird. 3. Mit der Möglichkeit, Kunstwerke zu reproduzieren und sie massenhaft verbreitet zu verteilen, entsteht ein neues Verhältnis zwischen dem Werk und seinem Publikum. 4. Die Trennung zwischen dem Original und den Kopien verschwindet, da jedes Reprodukt nun gleichwertig ist und kein Ehrenamt anerkannt wird. 5. Diese Entmystifizierung des Kunstwerks hat laut Benjamin dazu geführt, dass es zunehmend nur noch ein Mittel zur Veranschaulichung von Ideen oder zum Ausdruck von Standpunkten ist und weniger als autonome künstlerische Schöpfung gilt. 6. Er argumentiert, dass dies wiederum die Kultur des Kapitalismus fördert, da sie auf Massenproduktion und Standardisierung angewiesen ist. 7. Benjamin betont jedoch auch das Potenzial der technischen Reproduzierbarkeit für den demokratische Entwicklung der Kunst, indem es sie einem breiteren Publikum zugänglich macht und somit ihre Verbreitung fördert. 8. Trotz seiner Kritik an der technologischen Reproduzierbarkeit erkennt Benjamin auch ihren unvermeidlichen Einfluss auf die Kunstwelt des 20. Jahrhunderts an und weist damit einen wichtigen Impuls für zukünftige Diskussionen über das Verhältnis zwischen Technologie, Kultur und Kunstgattungen dar. 9. Insgesamt bietet Walter Benjamins "Das Kunstwerk im Zeitalter seiner technischen Reproduzierbarkeit" eine tiefgründige Analyse der Auswirkungen fortschreitender Technologien auf das kulturelle Gefüge und stellt damit einen bedeutenden Beitrag zur Diskussion über die Natur von Kunst und Kultur im modernen Zeitalter dar.

Test 2

- Model: GPT4All Falcon

- Parameters: 7 billion

- License: Apache-2

- Language(s) (NLP): English

- Link: https://huggingface.co/nomic-ai/gpt4all-falcon

Walter Benjamin's essay "The Work of Art in the Age of Mechanical Reproduction" is a seminal work in the field of art history and criticism. It was first published in 1936, but its ideas continue to resonate with contemporary audiences. In this essay, Benjamin argues that the technical reproducibility of artworks has fundamentally changed their nature and meaning. He writes, "The work of art in the age of mechanical reproduction is not a unique object, but an image without a referent, a copy without a model, a mass-produced commodity without a producer." Benjamin's argument challenges traditional notions of artistic value and originality. He argues that the technical reproducibility of artworks undermines their uniqueness and authenticity, making them interchangeable with other copies. This idea has been influential in art history and criticism, as it highlights the importance of context and historical significance in understanding artworks. Overall, Benjamin's essay is a thought-provoking exploration of the relationship between technology, art, and culture. Its ideas continue to be relevant today, as we grapple with issues related to digital media, mass production, and the role of technology in shaping our worldviews.

Test 3

- Model: Wizard v1.2

- Parameters: 13 billion

- File size: 6.86 GB

- Link: https://huggingface.co/WizardLM/WizardLM-13B-V1.2

"Das Kunstwerk im Zeitalter seiner technischen Reproduzierbarkeit", ein Essay von Walter Benjamin, untersucht die Auswirkungen der Technisierung auf das Verständnis und die Wertschätzung von Kunstwerken. Benjamin argumentiert, dass die Möglichkeit, Kunstwerke zu reproduzieren und zu verbreiten, sie in ihrer Bedeutung und ihrem Wert verändert hat. Er betont, dass die technische Reproduzierbarkeit das Original aus seiner einzigartigen Position als authentisches Objekt löst und es durch eine Vielzahl von Kopien ersetzt. Benjamin erklärt auch, wie diese Technisierung zu einer Veränderung der Beziehung zwischen dem Kunstwerk und seinem Publikum führt: Das Publikum kann nun nicht mehr direkt mit dem Original in Kontakt treten, sondern nur noch mit den Reproduktionen. Diese Entwicklung hat dazu geführt, dass die Wertschätzung von Kunstwerken sich von ihrer Authentizität zu ihrer Verfügbarkeit und Zugänglichkeit verlagert ist. Benjamin betont auch die Bedeutung der industriellen Massenproduktion für das Kunstwerk im Zeitalter seiner technischen Reproduzierbarkeit, da sie dazu führt, dass das Kunstwerk in den Alltag integriert wird und seine Funktionen als Symbol und Träger von Werten verändert. Schließlich argumentiert Benjamin, dass die Technisierung der Kunst auch zu einer Veränderung des Konzepts der Originalität führen kann: Während das Original noch durch seine Authentizität definiert ist, können Reproduktionen eine neue Form von Originalität darstellen, indem sie das Produkt eines bestimmten Prozesses sind. Insgesamt zeigt Benjamin auf, wie die Technisierung und Industrialisierung der Gesellschaft die Wahrnehmung und Bewertung von Kunstwerken beeinflussen und wie diese Entwicklung zu einer Neuorientierung des Kunstverständnisses notwendig ist.

Test 4

- Model: Hermes

- Paramaters: 13 billion

- File size: 6.86 GB

- Link: https://huggingface.co/NousResearch/Nous-Hermes-13b

Walter Benjamin's essay "The Work of Art in the Age of Its Technical Reproducibility" explores the impact of technology on art and culture. In this work, Benjamin argues that the ability to reproduce works of art through various technological means has fundamentally changed their nature and value. He suggests that while technical reproduction may have democratized access to art, it has also stripped away its aura or authenticity. Additionally, Benjamin posits that the loss of this aura has led to a shift in our appreciation of art, from an object-based focus to one centered on the experience of viewing it. Overall, "The Work of Art in the Age of Its Technical Reproducibility" is a thought-provoking examination of how technology has transformed the world of art and culture.

Test 5 (‘Control group’)

Model: ChatGPT4

Walter Benjamins Essay "Das Kunstwerk im Zeitalter seiner technischen Reproduzierbarkeit" stellt eine grundlegende Auseinandersetzung mit den Veränderungen dar, die Kunst und Kultur durch die Möglichkeiten der technischen Reproduktion erfahren. Benjamin argumentiert, dass die massenhafte Verbreitung von Kunstwerken durch Techniken wie Fotografie und Film die traditionelle "Aura" des Kunstwerks, also seine Einzigartigkeit und Echtheit, zerstört. Diese Entauratisierung führt zu einer Demokratisierung der Kunst, indem sie einem breiteren Publikum zugänglich gemacht wird und somit die klassischen Grenzen zwischen Künstler und Betrachter aufweicht. Benjamin sieht in der technischen Reproduzierbarkeit nicht nur eine Bedrohung für die traditionelle Kunst, sondern auch ein emanzipatorisches Potential. Er betont die politischen Implikationen, die sich aus der Veränderung der Wahrnehmung und der Partizipation des Publikums ergeben. So kann die Kunst im Zeitalter ihrer technischen Reproduzierbarkeit zum Werkzeug der Aufklärung und der sozialen Veränderung werden. Der Essay untersucht weiterhin die Unterschiede zwischen der Rezeption von traditioneller Kunst und der von massenmedial verbreiteter Kunst. Während das Betrachten eines Gemäldes in einem Museum eine kontemplative Erfahrung darstellt, ist die Rezeption eines Films durch seine dynamische Natur und die kollektive Erfahrung im Kino charakterisiert. Diese Veränderungen in der Rezeptionsweise beeinflussen auch die Rolle des Künstlers, der zunehmend zum Produzenten im Kontext der Kulturindustrie wird. Benjamin stellt auch die These auf, dass die technische Reproduzierbarkeit die Kunst von ihrer traditionellen Bindung an rituelle Kontexte löst. Früher war Kunst oft ein Teil religiöser oder zeremonieller Praktiken und diente bestimmten kultischen Zwecken. Durch die Reproduzierbarkeit wird Kunst autonom und kann für verschiedenste Zwecke eingesetzt werden, was sowohl Chancen als auch Risiken birgt. Abschließend reflektiert Benjamin über die Auswirkungen der technischen Reproduzierbarkeit auf die Ästhetik und die Wahrnehmung. Er betont, dass sich mit der Verbreitung der Reproduktionstechniken auch die sensorische Erfahrung des Menschen und sein Verständnis von Kunst und Kultur grundlegend wandeln. Diese Transformation erfordert eine kritische Auseinandersetzung mit den neuen Medien und ihren Auswirkungen auf die Gesellschaft.

Footnotes

- Thanks to the great work of the guys at wikisource I was able to use the third version of the essay (authorized by Benjamin in 1939). The source can be accessed here. Reference: Walter Benjamin – Gesammelte Schriften Band I, Teil 2, Suhrkamp, Frankfurt am Main 1980, pp. 471–508. ↩︎

- A great list that explains spaCy’s POS abbreviations (POS = part of speech) can be found here. ↩︎